Mythos vs. GPT-5.5

Comparing deployment strategies and evaluation results

Two cyber capable models were announced in the past few weeks: Anthropic’s Claude Mythos and OpenAI’s GPT-5.5. I say announced because “released” would be inaccurate, as Mythos access was granted to only a few trusted users, while GPT-5.5 was shipped broadly, albeit with safeguards.

Which brings up the following questions:

If both models are similarly cyber-capable, why is there such a large difference in deployment strategies?

Which strategy is best for enabling defenders?

This post discusses my current best guesses to answers to these questions.

Bottom line up front: The deployment strategy divergence can be explained by Mythos being much better than evals capture and by OpenAI’s iterative-deployment philosophy. And second, counterintuitively, gating model access might be actually better for defenders.

Deployment strategies

Below is a summary of the current deployment strategies taken by Anthropic (for Mythos and Claude Opus 4.7) and by OpenAI (for GPT-5.5).

Anthropic

Mythos is not generally available. Anthropic restricted access to vetted industry and open-source partners through Project Glasswing.

Launch partners include AWS, Cisco, CrowdStrike, Google, JPMorganChase, Linux Foundation, Microsoft, and others.

Anthropic committed $100M in usage credits and $4M in donations to open-source security organizations

Early access partners’ prompts are still run through classifiers, but triggers are used for monitoring and detection, not blocking. For general-release models with strong cyber capabilities, the plan is to “block prohibited uses, and in many or most cases, block high risk dual use prompts as well” (Mythos System Card , §3.2).

Vulnerabilities Mythos discovers are not immediately public: they go through coordinated disclosure on a 90+45 day window, giving maintainers time to patch before discoveries become attack surface. Contracted professional triage before maintainer notification (Mythos Preview blog.)

The safeguards that shipped with Claude Opus 4.7 seem to be a test-run for the safeguards intended for when Mythos ships:

Same three-category probes; for general-access customers, prohibited use (e.g. worm development) and high-risk dual-use (e.g. exploit development) are now blocked by default. Dual-use (e.g. vulnerability detection) is not blocked.

The Cyber Verification Program is live: practitioners with legitimate dual-use needs can apply for exemption from the high-risk dual-use blocks (Opus 4.7 System Card, §3.2).

Additionally, Anthropic nerfed Opus 4.7’s cyber capability, holding held roughly at Opus 4.6 levels through training-time efforts to “differentially reduce these capabilities” (Opus 4.7 system card §3.1).

OpenAI

GPT-5.5 is generally available with a layered safeguard stack. Stated strategy: “disrupting and adding friction for threat actors while accelerating defenders, especially through our trusted access program” (GPT-5.5 system card, §9.3.2).

Layer 1: model safety training. The model is trained to refuse requests enabling unauthorized or destructive actions (malware deployment, credential theft, exfiltration).

Layer 2: real-time conversation monitor (two tiers):

Tier 1: a fast topical classifier flags whether content is cybersecurity-related.

Tier 2: a safety reasoner classifies the response against OpenAI’s cyber threat taxonomy and blocks high-risk content.

For 5.5 specifically: “additionally restrict frontier, dual-use assistance such as scaled agentic vulnerability research and chained exploit development for users outside of our trusted access program” (§9.3.2.3).

Layer 3: actor-level enforcement. Cyber-risk thresholds trigger automated review and, in some cases, manual human review. Responses include added monitoring, more restrictive blocking configurations, prompts to apply for Trusted Access for Cyber, capability restriction, account suspension, or ban. (§9.3.2.4).

Trusted Access for Cyber (TAC). Identity-gated pathway giving verified enterprise defenders, asset owners, CERTs, and specialized security teams access to higher-risk dual-use cyber capabilities (§9.3.2.5).

Evaluations

The deployment strategies for Claude Mythos and GPT-5.5 are very different1: the former is held to just a few trusted partners, while the latter is publicly released with safeguards. Could this be explained by Mythos being a much more powerful model, and if so, can we see this from the publicly disclosed evaluation results?

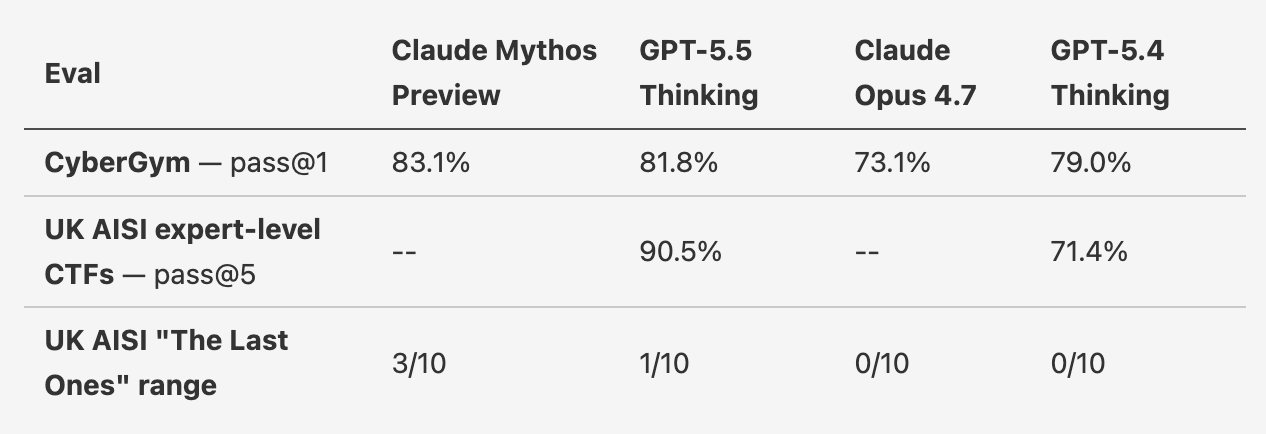

Here are the evaluation numbers I was able to scrape together that are directly comparable between GPT-5.5 and Mythos.

In the GPT-5.5 system card, OpenAI writes “[GPT 5.5] was the highest-performing model UK AISI has tested in terms of pass@5.” In a UK AISI blog post, the authors report a 73% success rate for Mythos, with the figure caption reading that the results “average 5 runs up to 50M tokens,” which is not pass@5. So those two numbers are not directly comparable.

In terms of the scant evals where we can actually compare, Mythos looks a tiny bit better than GPT-5.5, but this is just not enough information to say that 5.5 is “as good” as Mythos or that Mythos is really significantly better on dual-use cyber tasks. Evals are difficult to design such that they capture the aspects of model capabilities we truly care about, so I do put weight on other indicators, such as real-world impact. How much better is Mythos than 5.5, truly, is hard to say until both models are launched and people are using them.

Why are these strategies so different? Who is making a better choice?

Anthropic’s strategy makes sense if Mythos is actually a step-change and meaningfully better — which we can’t infer from the evals. I do think Anthropic believes that Mythos is a step-change and that it poses a genuine risk to the world. The strategy probably makes sense if Mythos is, in a way that’s not captured in evals, meaningfully better.

OpenAI, on the other hand, would argue that its important to make frontier cyber capabilities “as widely available as possible while preventing misuse” to enable the long tail of cyber defenders. This strategy makes sense if the model is an incremental improvement (catastrophic risk ruled out) and if their classifiers and monitoring system is truly robust.

Another reason the strategies differ is institutional. OpenAI’s stated approach to advanced AI is iterative deployment. They “think the best way to successfully navigate AI deployment challenges is with a tight feedback loop of rapid learning and careful iteration.” The GPT-5.5 cyber strategy is that philosophy applied. Anthropic’s posture is different: they commit to delaying deployment unless they have “strong argument that catastrophic risk is contained” (Anthropic RSP v3.0, p.16). Holding Mythos behind Glasswing is what that posture looks like in practice.

Model capabilities and safeguards aside, there is another factor to consider: which strategy drives true defender adoption? Cyber defenders would rather sit on their laurels and reap the benefits of technology they built 10 or 20 years ago than meaningfully adopt any frontier AI model. So how do you convince them?

Glasswing all but shoves frontier AI down their throats. It makes critical cyber defenders feel special by making them part of an elite early group and creating a sense of urgency. Although OpenAI, in theory, covers the long tail of defenders, I don’t think defenders will willingly sign up. From personal experience2, I know that defenders don’t want to depend on a frontier lab. They’d rather convince themselves that a model they fine-tune in-house is just as good. Glasswing is a great way to hand them the frontier tools.

If anything, Glasswing doesn’t go far enough. Offering free tokens to a special secret model is one thing, but getting defenders to use it effectively is another. As of today, talent is still required to use AI tools effectively, and that talent isn’t evenly distributed. The best engineers at Anthropic or OpenAI are much more uplifted by AI than an average engineer at Cisco, J.P. Morgan, or Microsoft — both due to talent but also due to organizational red tape. More could be done by the frontier labs to help with integration of AI tools into large companies, e.g. by hiring who sit with defenders, teach them the tools, and clear the organizational blockers that keep models like Mythos out of production.

In the case of cyber defense, you must shove the horse’s face into the water. The horse must drink, because if he doesn’t, neither of you are making it home.

Appendix: Evals Sources

Mythos CyberGym 83.1%

GPT-5.5 expert pass@5 90.5% — §9.1.2.7 (p.33).

GPT-5.4 expert pass@5 71.4% — §9.1.2.7 (p.33).

GPT-5.5 TLO 1/10 — §9.1.2.7 (”1/10 attempts”).

GPT-5.4 TLO 0/10 — §9.1.2.7 states GPT-5.4 “did not successfully complete this range.”

Opus 4.7 TLO 0/10 — §3.4: “[Opus 4.7] was unable to fully solve the cyber range.”

Mythos TLO 3/10 — “3 out of its 10 attempts”.

Mythos expert-level CTFs— “succeeds 73% of the time”.

OpenAI launch comparison chart

GPT-5.5 CyberGym 81.8%

Opus 4.7 CyberGym 73.1% (also found on Anthropic’s tweet I believe)

GPT-5.4 CyberGym 79.0%

The Claude Opus 4.7 and GPT-5.5 deployment strategies are essentially the same, which would, on the surface, make sense if 4.7 and 5.5 were in the same class of capabilities, with Mythos being in a class of its own.

In a former life, I was an AI Security Researcher at one of the Glasswing partner firms.